Security, Compliance, and Governance for AI on AWS: A Developer's Guide

March 28, 2026

AI systems are different from traditional software. They process sensitive personal data, make high-stakes decisions, adapt and change after deployment, and exhibit emergent behaviors no one anticipated. The same compliance checklist you ran for your REST API is not going to cut it for a model fine-tuned on customer health records.

This post covers the full security, compliance, and governance picture for AI systems built on AWS. It maps to Domain 5 of the AWS AI Practitioner (AIF-C01) exam and is structured as a reference for developers building production AI workloads.

Security, Governance, and Compliance: Three Distinct Functions

These three terms get used interchangeably but they mean different things and exist for different reasons:

Security ensures the confidentiality, integrity, and availability of your data, infrastructure, and information assets. It answers: "Is our system protected from threats?"

Governance ensures the organization can add value and manage risk in its operations. It answers: "Are we making the right decisions with AI, and do we have oversight?"

Compliance ensures adherence to external and internal requirements (regulations, standards, policies). It answers: "Are we meeting the rules we are required to follow?"

All three are required. A system can be technically secure but poorly governed (no human oversight, no bias checks). It can be compliant on paper but vulnerable to prompt injection. They are complementary layers, not synonyms.

Defense in Depth for AI on AWS

Defense in depth is the core security paradigm for building on AWS. The idea is layered redundancy: if one control fails or is compromised, other layers are in place to detect, isolate, and limit damage.

Applied to AI workloads, defense in depth uses seven layers, from the innermost to the outermost:

1. Data Protection

Encrypt all data at rest using AWS Key Management Service (AWS KMS), with either AWS managed keys or customer managed keys. Protect data in transit using AWS Certificate Manager (ACM) and AWS Private Certificate Authority. Keep data within private networks using AWS PrivateLink, and version your models and datasets with Amazon S3 versioning.
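One way to back up the encryption guidance above is a bucket policy on the S3 bucket that holds training data. The sketch below builds such a policy as a plain Python dictionary. The bucket name `example-training-data` is hypothetical, and the two statements are an illustration rather than a complete baseline.

```python
import json


def build_bucket_policy(bucket_name: str) -> dict:
    """Return a bucket policy that denies non-TLS access and non-KMS uploads."""
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Data in transit: reject any request that does not use TLS.
                "Sid": "DenyInsecureTransport",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": [bucket_arn, f"{bucket_arn}/*"],
                "Condition": {"Bool": {"aws:SecureTransport": "false"}},
            },
            {
                # Data at rest: reject uploads that do not request SSE-KMS.
                "Sid": "DenyNonKmsUploads",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:PutObject",
                "Resource": f"{bucket_arn}/*",
                "Condition": {
                    "StringNotEquals": {
                        "s3:x-amz-server-side-encryption": "aws:kms"
                    }
                },
            },
        ],
    }


# Hypothetical bucket name, used only for illustration.
policy = build_bucket_policy("example-training-data")
print(json.dumps(policy, indent=2))
```

Deny statements are used here because an explicit deny overrides any allow granted elsewhere, which keeps the rule in force even if an IAM policy is later loosened.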

2. Identity and Access Management

Only authorized users, applications, and services should be able to interact with your AI infrastructure. Core service: AWS Identity and Access Management (IAM). Apply least privilege everywhere.
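As a rough illustration of least privilege for inference, the sketch below builds an identity policy that allows a caller to invoke exactly one foundation model in Amazon Bedrock and nothing else. The Region and the model ID `example.model-id-v1` are placeholders, not real identifiers; substitute the model your application actually uses.

```python
import json


def invoke_only_policy(region: str, model_id: str) -> dict:
    """Identity policy allowing inference on a single Bedrock foundation model."""
    # Foundation model ARNs have an empty account field (note the double colon).
    model_arn = f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeSingleModel",
                "Effect": "Allow",
                "Action": ["bedrock:InvokeModel"],
                "Resource": [model_arn],
            }
        ],
    }


# Placeholder Region and model ID, used only for illustration.
policy = invoke_only_policy("us-east-1", "example.model-id-v1")
print(json.dumps(policy, indent=2))
```

The policy names one action and one resource. Anything not listed (other models, fine-tuning jobs, configuration changes) is denied by default.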

3. Application Protection

Defend against unauthorized access, data breaches, and denial-of-service attacks at the application layer. Key services: AWS Shield, Amazon Cognito.

4. Network and Edge Protection

Protect your network boundaries and prevent unauthorized access to cloud-based resources. Key services: Amazon VPC, AWS WAF.

5. Infrastructure Protection

Secure the underlying infrastructure against unauthorized access, data breaches, and system failures. Key services: AWS IAM user groups, network access control lists (network ACLs).

6. Threat Detection and Incident Response

Detect and respond to threats in real time. Threat detection services: Amazon GuardDuty, AWS Security Hub. Incident response services: AWS Lambda, Amazon EventBridge (for automated playbooks).
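To make the automated playbook idea concrete, here is a minimal sketch of the two pieces involved: an EventBridge event pattern that matches high-severity GuardDuty findings, and a Lambda handler that receives the matching event. The action names `isolate_resource` and `notify_only` are hypothetical placeholders; the handler only decides what to do and logs it, it does not call any AWS API.

```python
import json

# EventBridge event pattern: GuardDuty findings with severity 7 or above
# (GuardDuty's "High" range starts at 7).
GUARDDUTY_HIGH_SEVERITY_PATTERN = {
    "source": ["aws.guardduty"],
    "detail-type": ["GuardDuty Finding"],
    "detail": {"severity": [{"numeric": [">=", 7]}]},
}


def lambda_handler(event: dict, context: object) -> dict:
    """Summarize a GuardDuty finding and pick a (hypothetical) playbook action."""
    detail = event.get("detail", {})
    severity = float(detail.get("severity", 0))
    # Placeholder decision logic; a real playbook would call remediation code here.
    action = "isolate_resource" if severity >= 7 else "notify_only"
    summary = {
        "finding_id": detail.get("id"),
        "finding_type": detail.get("type"),
        "severity": severity,
        "action": action,
    }
    print(json.dumps(summary))  # Lambda forwards stdout to CloudWatch Logs
    return summary


# Local usage example with a trimmed-down, made-up finding event.
sample_event = {
    "source": "aws.guardduty",
    "detail-type": "GuardDuty Finding",
    "detail": {
        "id": "example-finding-id",
        "type": "UnauthorizedAccess:EC2/SSHBruteForce",
        "severity": 8,
    },
}
result = lambda_handler(sample_event, None)
```

Filtering by severity in the event pattern, rather than inside the function, means the Lambda function is only invoked for findings that warrant a response.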

7. Policies, Procedures, and Awareness

The outermost layer is organizational. Implement a least privilege policy. Use AWS IAM Access Analyzer to identify overly permissive accounts and roles. Restrict access using short-term credentials.
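The idea of looking for overly permissive access can be illustrated with a small local check. The sketch below is a toy lint that flags Allow statements with wildcard actions or resources. It is not how IAM Access Analyzer works and covers far less; the function name and the example policy are made up for illustration.

```python
def _as_list(value):
    """IAM allows a single string or a list; normalize to a list."""
    return value if isinstance(value, list) else [value]


def find_wildcard_statements(policy: dict) -> list[str]:
    """Return a description of every Allow statement that uses a wildcard."""
    findings = []
    for index, stmt in enumerate(_as_list(policy.get("Statement", []))):
        if stmt.get("Effect") != "Allow":
            continue  # Deny statements with wildcards are usually intentional
        sid = stmt.get("Sid", f"statement-{index}")
        actions = _as_list(stmt.get("Action", []))
        resources = _as_list(stmt.get("Resource", []))
        if any(a == "*" or a.endswith(":*") for a in actions):
            findings.append(f"{sid}: wildcard action")
        if any(r == "*" for r in resources):
            findings.append(f"{sid}: wildcard resource")
    return findings


# Made-up policy: one overly broad statement, one scoped statement.
example_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "TooBroad", "Effect": "Allow", "Action": "s3:*", "Resource": "*"},
        {
            "Sid": "Scoped",
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": ["arn:aws:s3:::example-training-data/*"],
        },
    ],
}
findings = find_wildcard_statements(example_policy)
print(findings)  # ['TooBroad: wildcard action', 'TooBroad: wildcard resource']
```

A check like this can run in CI before a policy is deployed, with the managed service used afterward to analyze what is actually in the account.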

Why AI Compliance Is Different from Traditional Software Compliance

Traditional software compliance assumes you can audit the code, understand the decision logic, and apply static standards. AI breaks each of those assumptions:

Complexity and opacity. LLMs and generative AI models make decisions through billions of parameters. You cannot trace an output back to a single rule or function. This makes traditional audit processes difficult to apply.

Dynamism. AI systems can change behavior over time, even after deployment (through fine-tuning, feedback loops, or drift). Static compliance checklists were not designed for systems that evolve.

Emergent capabilities. AI systems sometimes exhibit capabilities that were not programmed and were not anticipated during development. Regulations written before deployment cannot account for behaviors that only appear afterward.

Unique risks. Algorithmic bias, privacy violations, misinformation, and workforce displacement are AI-specific risks that traditional software compliance frameworks do not address. Algorithmic bias can come from unrepresentative training data or from the assumptions built in by developers during the design process.

Algorithm accountability. Because AI outputs can significantly impact individuals (credit decisions, medical diagnoses, hiring), regulators are introducing laws that require transparency, explainability, and human oversight. Examples: the EU AI Act and New York City's Automated Decision Systems Law.

AWS Compliance Standards You Need to Know

AWS supports 143 security standards and compliance certifications. The ones most relevant to AI workloads:

NIST 800-53 is the standard for U.S. federal information systems, requiring formal evaluation of safeguards for confidentiality, integrity, and availability.

ISO/IEC 27001 outlines recommended security management practices based on the ISO/IEC 27002 document. Widely used internationally.

SOC Reports are independent third-party assessments of AWS controls and compliance objectives.

HIPAA applies if your AI system processes protected health information (PHI). AWS offers HIPAA-eligible services and signs a Business Associate Addendum (BAA) with covered entities and their business associates.

GDPR applies if you process personal data of individuals in the EU, regardless of their citizenship or where your organization is based. It establishes strict requirements for data protection, consent, and breach notification.

PCI DSS applies if your AI system processes payment card data. Managed by the PCI Security Standards Council.

A workload is considered regulated when it must meet one or more of these frameworks, or when its outputs constitute a legal record, involve safety-critical decisions, or have significant liability implications (HR decisions, mortgage approvals, FDA reporting, safety systems).

The GenAI Security Scoping Matrix

Not all AI systems have the same security scope. AWS defines five scopes based on how much of the AI stack your organization owns and controls:

Scope 1: Consumer App. You use a public third-party generative AI service (ChatGPT, Claude.ai, etc.) according to the provider's terms. You don't own or see the training data or model.

Scope 2: Enterprise App. You use a third-party enterprise application with embedded generative AI features under a formal business relationship.

Scope 3: Pre-trained Models. You build your own application by integrating a third-party foundation model (FM) through an API. Example: a customer support chatbot built on Claude through Amazon Bedrock.

Scope 4: Fine-tuned Models. You take a third-party FM and fine-tune it with your own data to produce a specialized model. You control the fine-tuning data, but the base model's training data belongs to the provider.

Scope 5: Self-trained Models. You build and train a model from scratch using data you own. You control everything.

Security responsibility increases significantly as you move from Scope 1 to Scope 5. The matrix maps five security disciplines across all five scopes: Governance and Compliance, Legal and Privacy, Risk Management, Controls, and Resilience.

OWASP Top 10 for LLMs

The Open Worldwide Application Security Project (OWASP) publishes a dedicated Top 10 list for LLM applications. The entries below follow the 2023 edition of that list. These are the vulnerabilities you need to design against when building GenAI systems:

Prompt injection - Malicious inputs that manipulate the model's behavior or override system instructions.

Insecure output handling - Failure to sanitize model outputs before passing them to other systems or displaying them to users.

Training data poisoning - Introducing malicious data into training sets so the model learns harmful behaviors.

Model denial of service - Exploiting model architecture vulnerabilities to disrupt availability.

Supply chain vulnerabilities - Weaknesses in the software, hardware, or services used to build or deploy the model.

Sensitive information disclosure - Leakage of sensitive data through model outputs (the model may have memorized training data).

Insecure plugin design - Flaws in optional model components (tools, plugins, function calls) that can be exploited.

Excessive agency - Granting the model too much autonomy or capability, leading to unintended actions.

Overreliance - Over-trusting model outputs without proper auditing or human oversight.

Model theft - Unauthorized copying or replication of model parameters or architecture.
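Two of the items above lend themselves to a small code illustration: insecure output handling and excessive agency. The sketch below escapes model output before it is placed into HTML and checks requested tool calls against an explicit allowlist. The tool names are hypothetical, and real mitigations would go further (output validation per destination, scoped credentials per tool, human approval for risky actions).

```python
import html

# Hypothetical tool names the application is willing to expose to the model.
ALLOWED_TOOLS = {"search_kb", "get_order_status"}


def render_model_output(text: str) -> str:
    """Escape model output before embedding it in an HTML page."""
    return html.escape(text)


def authorize_tool_call(tool_name: str) -> bool:
    """Permit only explicitly allowlisted tools, whatever the model asks for."""
    return tool_name in ALLOWED_TOOLS


escaped = render_model_output('<script>alert("x")</script>')
print(escaped)  # &lt;script&gt;alert(&quot;x&quot;)&lt;/script&gt;

print(authorize_tool_call("get_order_status"))  # True
print(authorize_tool_call("delete_customer"))   # False
```

Both checks treat the model as an untrusted component: its text is data to be sanitized, and its requested actions are proposals to be authorized by the application.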

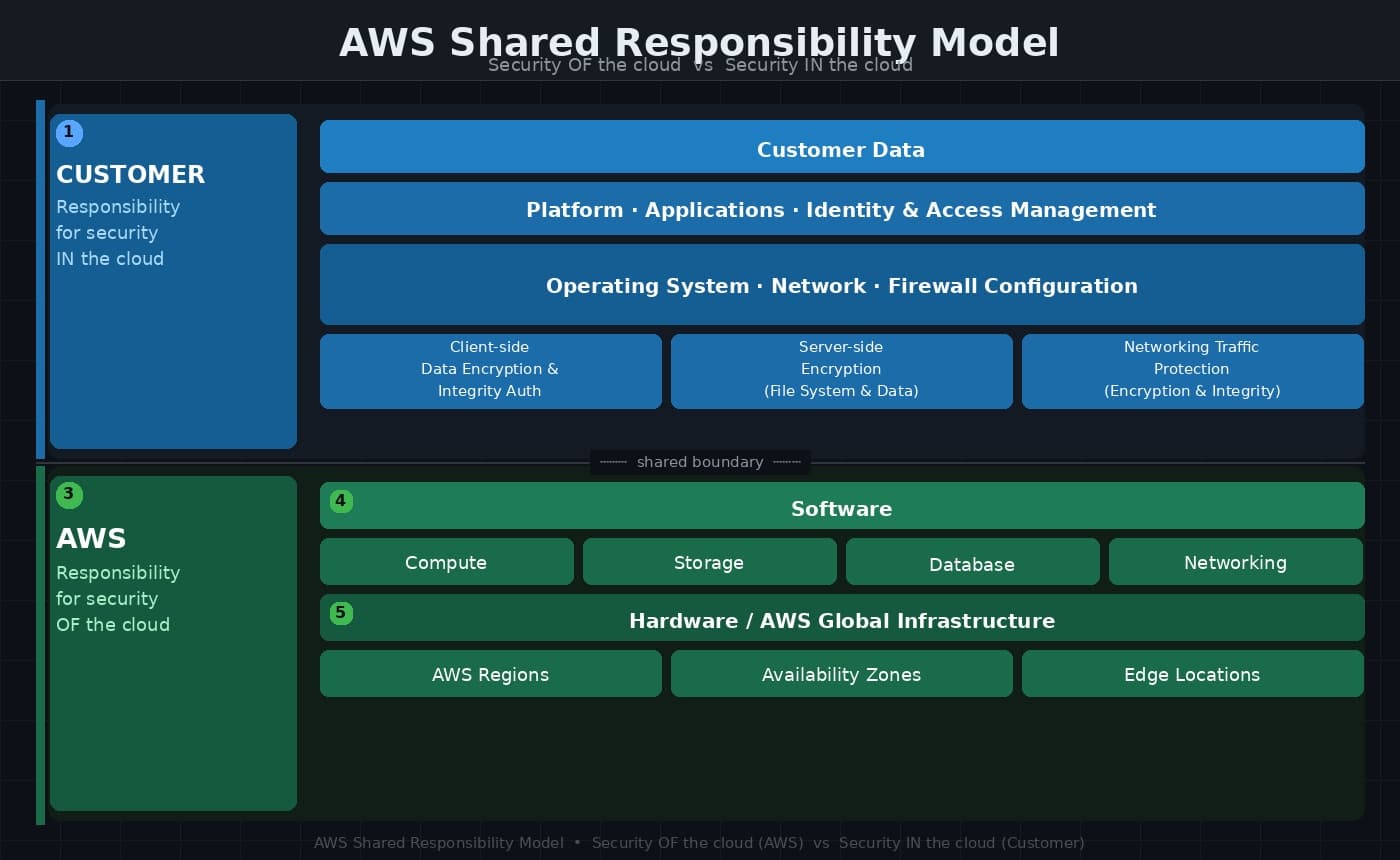

The AWS Shared Responsibility Model

Security and compliance on AWS is a shared responsibility. The boundary is described as: security *of* the cloud (AWS's job) versus security *in* the cloud (your job).

AWS is responsible for:

Hardware and AWS Global Infrastructure (Regions, Availability Zones, edge locations)

Software layer: compute, storage, database, and networking services

You (the customer) are responsible for:

Customer data

Platform, applications, and identity and access management

Operating system, network, and firewall configuration

Client-side data encryption and data integrity authentication

Server-side encryption (file system and data)

Networking traffic protection (encryption, integrity, identity)

For AI workloads, this means you own the security of your training data, your fine-tuned models, your application logic, and your IAM configuration. AWS secures the infrastructure underneath.

Key AWS Services for Securing AI Systems

Four Foundational Security Services

These four services are recommended for every workload, regardless of industry or scale:

AWS Security Hub provides a single dashboard for all security findings and supports automated response playbooks.

AWS KMS encrypts data and gives you the choice between AWS managed keys and customer managed keys.

Amazon GuardDuty is a threat detection service that monitors for suspicious activity and unauthorized behavior across your AWS accounts, workloads, and data.

AWS Shield Advanced protects against DDoS events and includes AWS WAF and AWS Firewall Manager.

Additional Services for AI-Specific Needs

Amazon Macie uses ML to automatically discover sensitive data (PII, PHI, financial information) in S3 buckets. Use it before training or fine-tuning a model to identify data that should be removed or additionally protected.

AWS IAM and IAM Identity Center let you implement fine-grained, least-privilege access controls for your models, data, and inference endpoints. Amazon SageMaker AI Role Manager provides three preconfigured role personas: data scientist, MLOps, and SageMaker AI compute.

Amazon VPC and AWS PrivateLink establish private connectivity to Amazon Bedrock and other AWS services without exposing traffic to the public internet.

Amazon Inspector performs continuous vulnerability scanning of EC2 instances, container images, and Lambda functions.

Amazon Detective supports forensic investigation by analyzing and visualizing security findings over time.

AWS WAF Bot Control protects generative AI web applications from scrapers, scanners, and crawlers that can skew model metrics, consume excess compute, or disrupt availability.

Data Governance Strategies for AI

Data governance for AI covers the full lifecycle of data: collection, storage, usage, and disposal. Six core strategies:

Data quality and integrity. Define quality standards (completeness, accuracy, timeliness, consistency) and implement validation at each stage of the pipeline. Maintain data lineage to track where data came from and how it was transformed.

Data protection and privacy. Enforce access controls and encryption. Develop data breach response procedures. Apply privacy-enhancing technologies like data masking, tokenization, and differential privacy.

Data lifecycle management. Classify data by sensitivity and criticality. Set retention and disposition policies. Back up data for resilience and business continuity.

Responsible AI. Establish frameworks to monitor models for bias and fairness issues. Document limitations and intended use cases. Train development teams on responsible AI practices.

Governance structures and roles. Create a data governance council. Define roles: data stewards (responsible for day-to-day quality), data owners (accountable for the asset), and data custodians (responsible for technical storage and security).

Data sharing and collaboration. Establish data sharing agreements. Use data virtualization or federation for access to distributed sources without compromising ownership.
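Of the privacy-enhancing technologies mentioned under data protection, masking and tokenization are the simplest to sketch. The example below masks an email address for display and replaces a value with a deterministic keyed hash. The keyed hash (HMAC-SHA256) is used here as a stand-in for a tokenization service; a production system would more likely use a token vault, and the key shown is a placeholder that would normally come from a secrets manager.

```python
import hashlib
import hmac


def mask_email(email: str) -> str:
    """Keep the first character and the domain; hide the rest of the local part."""
    local, _, domain = email.partition("@")
    return f"{local[:1]}{'*' * max(len(local) - 1, 0)}@{domain}"


def tokenize(value: str, secret_key: bytes) -> str:
    """Replace a value with a deterministic keyed token (HMAC-SHA256 hex digest)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


# Placeholder key for illustration only; never hard-code real keys.
key = b"example-key-not-for-production"

masked = mask_email("jane.doe@example.com")
print(masked)  # j*******@example.com

token_a = tokenize("jane.doe@example.com", key)
token_b = tokenize("jane.doe@example.com", key)
print(token_a == token_b)  # True: same input and key give the same token
```

Deterministic tokens let you join or deduplicate records in a training set without exposing the underlying value, at the cost of revealing which records share a value.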

Key data governance concepts to know for the AIF-C01 exam:

Data lineage: the full history of how data moved and was transformed across systems

Data residency: where data is physically stored, which affects regulatory compliance

Data drift: when the distribution of input data changes over time, degrading model performance

Data logging: systematic recording of inputs, outputs, and model performance metrics for debugging and auditing
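Data drift is easier to reason about with a number attached. One common way to quantify it, not mentioned above and offered here only as an example, is the population stability index (PSI): bucket a baseline sample, bucket a recent sample with the same edges, and sum (a_i - e_i) * ln(a_i / e_i) over the buckets, where e_i and a_i are the baseline and recent shares. The sketch below is a minimal pure-Python version; the bin count and the floor value are arbitrary choices.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range go into the first or last bucket.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids division by zero and log(0) for empty buckets.
        return [max(c / len(values), 1e-6) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = list(range(100))           # made-up feature values seen at training time
shifted = [v + 50 for v in baseline]  # the same feature after a large shift

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, shifted)
print(psi_same)            # 0.0
print(psi_shifted > 0.25)  # True
```

A frequently cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but those thresholds are conventions and should be tuned per feature.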

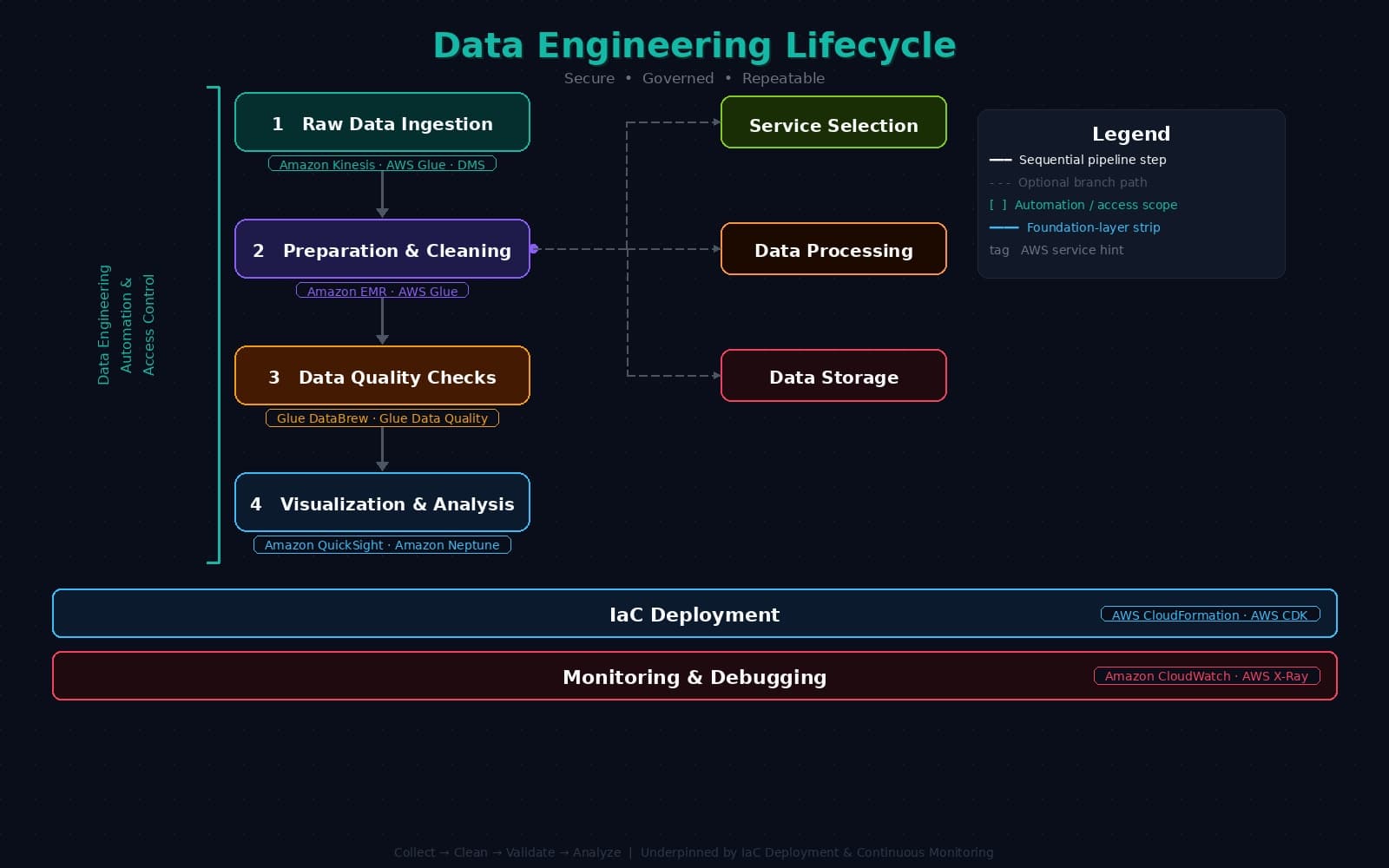

The Data Engineering Lifecycle

The data engineering lifecycle describes how data moves from raw ingestion to trained model. It has seven stages. Five are sequential; two are foundational and run throughout.

Stage 1 (Foundation): Automation and Access Control

Every data pipeline needs automation: a start action, connecting steps, and a mechanism to separate failed and passed stages. Log failures without halting the rest of the ETL process.

AWS tool: AWS Glue workflows.
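The behavior described for this stage (run each step, separate passed from failed, log failures without stopping) can be sketched in a few lines of plain Python. This is a stand-in for what an orchestrator does, not AWS Glue code; the stage names and functions are invented, and it assumes the stages are independent of each other.

```python
import logging
from typing import Callable

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("etl")


def run_stages(stages: list[tuple[str, Callable[[], None]]]) -> dict[str, list[str]]:
    """Run every stage, recording which passed and which failed."""
    results: dict[str, list[str]] = {"passed": [], "failed": []}
    for name, stage in stages:
        try:
            stage()
            results["passed"].append(name)
        except Exception:
            # Log the full traceback, then continue with the remaining stages.
            logger.exception("Stage %s failed; continuing", name)
            results["failed"].append(name)
    return results


def extract():
    pass  # placeholder for real ingestion work


def transform_bad_records():
    raise ValueError("malformed row")  # simulated failure


def load():
    pass  # placeholder for real load work


results = run_stages([
    ("extract", extract),
    ("transform_bad_records", transform_bad_records),
    ("load", load),
])
print(results)  # {'passed': ['extract', 'load'], 'failed': ['transform_bad_records']}
```

If later stages depend on earlier ones, the runner would also need to skip downstream work when an upstream stage fails, which is what workflow triggers are for.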

Stage 2: Data Collection (Raw Ingestion)

Ingest raw data from various sources (streaming, batch, databases).

AWS tools: Amazon Kinesis, AWS Database Migration Service (DMS), AWS Glue.

Stage 3: Data Preparation and Cleaning

The most time-consuming stage. Normalize, deduplicate, handle missing values, and structure data for training.

AWS tools: Amazon EMR, AWS Glue.
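For a sense of what this stage involves at small scale, the sketch below normalizes a key field, removes duplicates, and fills missing values. The record layout (`email`, `age`) and the choice of median imputation are assumptions made for the example; on AWS the same logic would typically run as a Glue or EMR job over far more data.

```python
from statistics import median


def clean_records(records: list[dict]) -> list[dict]:
    """Normalize emails, drop duplicates and keyless rows, fill missing ages."""
    # Median of all known ages in the raw input, used to fill gaps.
    known_ages = [r["age"] for r in records if r.get("age") is not None]
    fill_age = median(known_ages) if known_ages else None

    seen = set()
    cleaned = []
    for record in records:
        email = (record.get("email") or "").strip().lower()  # normalize
        if not email or email in seen:  # drop rows with no key, and duplicates
            continue
        seen.add(email)
        age = record.get("age")
        cleaned.append({"email": email, "age": fill_age if age is None else age})
    return cleaned


# Made-up raw records for illustration.
raw = [
    {"email": " Ana@Example.com ", "age": 34},
    {"email": "ana@example.com", "age": 34},   # duplicate after normalization
    {"email": "bo@example.com", "age": None},  # missing value
    {"email": None, "age": 50},                # no key, dropped
]
cleaned = clean_records(raw)
print(cleaned)
```

With the sample input, two records remain: Ana's row once, and Bo's row with the median age filled in.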

Stage 4: Data Quality Checks

Validate that cleaned data meets the quality standards defined in your governance framework.

AWS tools: AWS Glue DataBrew, AWS Glue Data Quality.
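A quality gate can be as simple as measuring a metric and comparing it to a threshold from your governance framework. The sketch below checks completeness for a set of required fields. The field names and the 0.95 threshold are illustrative assumptions, and this is a plain-Python stand-in rather than a managed data quality ruleset.

```python
def quality_report(records, required_fields, min_completeness=0.95):
    """Report per-field completeness and whether it meets the threshold."""
    total = len(records)
    report = {}
    for field in required_fields:
        # A value counts as present if it is neither None nor an empty string.
        present = sum(1 for r in records if r.get(field) not in (None, ""))
        completeness = present / total if total else 0.0
        report[field] = {
            "completeness": completeness,
            "passed": completeness >= min_completeness,
        }
    return report


# Made-up labeled records; one row is missing its label.
rows = [
    {"text": "refund request", "label": "billing"},
    {"text": "login failure", "label": "access"},
    {"text": "slow dashboard", "label": ""},
    {"text": "invoice copy", "label": "billing"},
]
report = quality_report(rows, ["text", "label"])
print(report["text"])   # {'completeness': 1.0, 'passed': True}
print(report["label"])  # {'completeness': 0.75, 'passed': False}
```

A failed field can block the pipeline from proceeding to training, which turns the quality standard from a document into an enforced control.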

Stage 5: Data Visualization and Analysis

Explore patterns, validate assumptions, and identify anomalies before training. This maps to Exploratory Data Analysis (EDA).

AWS tools: Amazon QuickSight (charts/graphs), Amazon Neptune (graph database visualization).

Stage 6: IaC Deployment

Any deployed infrastructure should be backed by code. Treat your pipeline infrastructure the same way you treat application infrastructure.

AWS tool: AWS CloudFormation (and AWS CDK, which generates CloudFormation).
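As a small example of infrastructure backed by code, the sketch below generates a CloudFormation template for a training-data bucket with versioning, default SSE-KMS encryption, and public access blocked. The logical ID `TrainingDataBucket` and the description are arbitrary; the template is built as a Python dictionary and serialized to JSON, which CloudFormation accepts alongside YAML.

```python
import json


def training_bucket_template(logical_id: str = "TrainingDataBucket") -> dict:
    """CloudFormation template for a versioned, encrypted, private S3 bucket."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Example bucket for ML training data (illustrative only)",
        "Resources": {
            logical_id: {
                "Type": "AWS::S3::Bucket",
                "Properties": {
                    # Keep prior versions of datasets and model artifacts.
                    "VersioningConfiguration": {"Status": "Enabled"},
                    # Encrypt new objects with SSE-KMS by default.
                    "BucketEncryption": {
                        "ServerSideEncryptionConfiguration": [
                            {
                                "ServerSideEncryptionByDefault": {
                                    "SSEAlgorithm": "aws:kms"
                                }
                            }
                        ]
                    },
                    # Block every form of public access to the bucket.
                    "PublicAccessBlockConfiguration": {
                        "BlockPublicAcls": True,
                        "BlockPublicPolicy": True,
                        "IgnorePublicAcls": True,
                        "RestrictPublicBuckets": True,
                    },
                },
            }
        },
    }


template = training_bucket_template()
template_json = json.dumps(template, indent=2)
print(template_json)
```

Because the security settings live in the template, they are reviewed in pull requests and reapplied on every deployment instead of being configured by hand.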

Stage 7 (Foundation): Monitoring and Debugging

Not a sequential step. Monitoring runs throughout the entire lifecycle, tracking correctness, performance, and data drift.

AWS tool: Amazon CloudWatch.

Data and Model Lineage

Data and model lineage refer to the detailed record of where your data and models came from, how they were transformed, and how they evolved over time. This is critical for reproducing results, auditing compliance, and understanding the limits of your models.

Three core tools for documenting lineage:

Data lineage tracking documents the journey of training data from its initial sources through to the final model. It provides traceability and reproducibility for audits.

Cataloging organizes datasets, models, and resources into a centralized repository with metadata about sources, licenses, and transformations.

Model Cards are a standardized format for documenting what a model does, how it was trained, its performance characteristics, and its known limitations. Amazon SageMaker AI Model Cards lets you create and manage model cards directly within SageMaker AI, cataloging intended use, risk rating, training details, evaluation results, and custom notes. They are useful for audit activities and for communicating model intent to stakeholders.
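To show what a model card captures, here is a minimal sketch of one as a Python dataclass that serializes to JSON. The field names follow the categories listed above (intended use, risk rating, training details, evaluation results, notes) but are this example's own; they are not the SageMaker AI Model Cards schema, and the model name and metric values are invented.

```python
import json
from dataclasses import asdict, dataclass, field

VALID_RISK_RATINGS = {"low", "medium", "high", "unknown"}  # assumed rating scale


@dataclass
class ModelCard:
    """A hypothetical, minimal model card record."""

    name: str
    intended_use: str
    risk_rating: str
    training_details: dict
    evaluation_results: dict
    known_limitations: list = field(default_factory=list)
    notes: str = ""

    def validate(self) -> None:
        """Raise ValueError if required governance fields are missing or invalid."""
        if self.risk_rating not in VALID_RISK_RATINGS:
            raise ValueError(f"unknown risk rating: {self.risk_rating!r}")
        if not self.intended_use.strip():
            raise ValueError("intended_use must not be empty")


# Invented example values for illustration.
card = ModelCard(
    name="support-ticket-classifier",
    intended_use="Route inbound support tickets to the correct queue.",
    risk_rating="medium",
    training_details={"dataset": "tickets-2025-q4", "base_model": "example-fm"},
    evaluation_results={"accuracy": 0.91},
    known_limitations=["English-language tickets only"],
)
card.validate()
card_json = json.dumps(asdict(card), indent=2)
print(card_json)
```

Validating the card in the deployment pipeline is one way to make sure no model ships without a stated purpose and risk rating.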

Practical Takeaways

Security, compliance, and governance for AI is a cross-disciplinary problem. It sits at the intersection of infrastructure security, data engineering, legal compliance, and responsible AI. A few things that are worth remembering:

Security, governance, and compliance solve different problems. Do not conflate them.

Defense in depth means you do not rely on any single control. If your IAM configuration is perfect but your S3 bucket encryption is missing, you still have a vulnerability.

The AWS Shared Responsibility Model means AWS protects the infrastructure. The security of your data, applications, and access controls is your responsibility.

The GenAI Scoping Matrix determines how much of the AI stack you own and therefore how much security responsibility sits with you. Scope 1 (consumer app) requires much less security investment than Scope 5 (self-trained model).

OWASP Top 10 for LLMs is the practical threat model for generative AI applications. Prompt injection and excessive agency deserve particular attention in production agentic systems, where the model can act on tools and data.

Model cards are not just documentation. They are a governance artifact.

Data quality is a security concern. Biased or poisoned training data produces biased or manipulated models.
