How to Build a Generative AI Application: The Full Lifecycle on AWS

March 27, 2026

Most developers treat generative AI as a black box: pick a model, write a prompt, ship it. That works for demos. It does not work for production applications that need to be reliable, cost-effective, and actually solve the business problem they were designed for.

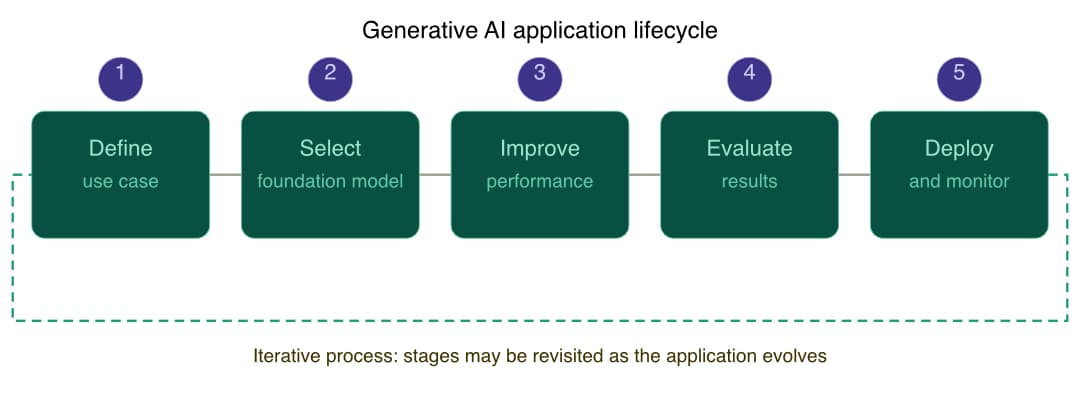

The AWS AI Practitioner course "Developing Generative AI Solutions" gives a structured framework for thinking about this: a five-stage lifecycle that treats AI application development as an engineering discipline, not a prompt-guessing game.

Here is a breakdown of every stage, with the decisions that actually matter at each one.

The Generative AI Application Lifecycle

Building a generative AI application is an iterative process. You will not go through these five stages once and be done. You will revisit earlier stages as user needs change, as better models become available, and as your production data reveals gaps in your initial design.

The five stages are:

Define a use case

Select a foundation model

Improve performance

Evaluate results

Deploy and monitor

Stage 1: Define a Use Case

This is the stage most developers rush through. Do not.

A poorly defined use case leads to the wrong model choice, the wrong evaluation metrics, and a final product that passes all your tests but fails the people who use it.

A well-defined use case is not "build a chatbot." It is a structured document with:

A short, descriptive name

A high-level purpose statement

The actors who interact with the system (humans, external APIs, downstream services)

Preconditions (what must be true before the use case runs)

The main success flow, step by step

Alternative flows for errors and edge cases

Postconditions (what state the system should be in after success)

Business rules, nonfunctional requirements, and assumptions

When applying generative AI to a business problem, the metrics that typically measure success are: cost savings, time savings, quality improvement, customer satisfaction, and productivity gains. Knowing which of these you are optimizing for changes every decision downstream.

The five main approaches generative AI takes to business problems are: process automation, augmented decision-making, personalization, creative content generation, and exploratory analysis. Picking the right approach early keeps the scope manageable.

Stage 2: Select a Foundation Model

Once the use case is defined, you select a foundation model. The two options are: use a pre-trained model or train from scratch. For the vast majority of real applications, you use a pre-trained model.

The criteria for selecting a pre-trained model are:

Cost: licensing fees, inference compute, and customization costs

Modality: text, image, audio, or multimodal

Latency: real-time vs. batch requirements

Multi-lingual support: if the application needs it

Model size and complexity: larger models perform better on complex tasks but cost more to run

Customization: can it be fine-tuned on your domain data?

Input/output length: context window matters for long-form content or document processing

Responsibility: bias, misinformation risks, training data provenance

Deployment and integration: compatibility with your existing infrastructure

Amazon Bedrock gives you access to models from multiple providers without managing the infrastructure: AI21 Labs (Jurassic-2 for text), Amazon Titan (text, embeddings, images), Anthropic Claude 3 (text and vision), Cohere Command (text and summarization), Meta Llama 3 (open weights, variable-length generation), Mistral Large (complex reasoning and code), and Stability AI Stable Diffusion (image generation).

Each model has different strengths. Claude 3 has three tiers (Haiku, Sonnet, Opus) so you can match intelligence level and cost to the task. Stable Diffusion handles image generation. Titan handles embeddings if you are building a RAG pipeline.

Stage 3: Improve Performance

A pre-trained model out of the box rarely gives you exactly what you need. This stage is about closing the gap between "what the model knows" and "what your application requires."

There are four main techniques, and they are not mutually exclusive.

Prompt Engineering

The fastest and cheapest approach. Prompt engineering is the practice of crafting input instructions to guide the model toward the output you want. Done well, it can eliminate the need for fine-tuning entirely.

Key aspects: clear and context-rich design, augmentation with examples or constraints, iterative tuning, ensembling multiple prompts, and mining effective patterns from libraries.

Common techniques: zero-shot, few-shot, chain-of-thought (CoT), self-consistency, tree of thoughts (ToT), ReAct, and Automatic Reasoning and Tool-use (ART).

Retrieval Augmented Generation (RAG)

RAG gives the model access to external knowledge at inference time without retraining it. It combines two components: a retrieval system (which finds relevant documents from a knowledge base using sparse or dense retrieval) and a generative model (which uses those documents plus its own language generation to produce a response).

RAG is the right choice when your application needs to answer questions about proprietary or frequently updated information that the base model was never trained on.

Common applications: customer service chatbots backed by product documentation, legal research tools backed by case law, healthcare assistants backed by clinical guidelines.

Amazon Bedrock's Knowledge Bases feature handles the RAG pipeline for you, including vector indexing and retrieval.

Fine-Tuning

Fine-tuning further trains a pre-trained model on a task-specific dataset. Use it when prompt engineering has hit its ceiling and you have labeled domain data to work with.

Two approaches: instruction fine-tuning (training the model to follow specific instructions, including prompt tuning as a variant) and RLHF (reinforcement learning from human feedback, which aligns the model with human preferences).

The five steps: start with a pre-trained model, prepare a task-specific dataset, add task-specific layers, fine-tune on that dataset, then evaluate and iterate.

Agents for Multi-Step Tasks

Agents break complex processes into smaller, orchestrated steps. They handle task coordination, logging, concurrency, and integration with external systems via APIs or message queues.

Amazon Bedrock Agents can provision cloud resources, deploy services, automate operational tasks, and monitor infrastructure. This is the pattern to reach for when the work involves sequences of dependent actions that a single prompt cannot complete.

The Cost vs. Accuracy Trade-Off

The customization options form a pyramid:

RAG with generic FMs: used by the majority of applications. Lowest complexity, lowest cost, general purpose.

Fine-tuned FMs: moderate complexity and cost, more specialized.

FM from scratch: highest complexity, highest cost, fully purpose-built. Reserved for research or highly specialized applications.

Start with RAG. Only move up the pyramid if the results are not good enough and you have the data and budget to justify it.

Stage 4: Evaluate Results

Evaluation is where most teams cut corners and then wonder why the application underperforms in production. There are three approaches, and they each catch different problems.

Human Evaluation

The gold standard. Human evaluators rate model outputs on coherence, relevance, factuality, and quality. Expensive and slow, but necessary for catching problems that automated metrics miss, especially in open-ended generation tasks.

Benchmark Datasets

Standardized test sets that let you compare your model's performance against baselines. Key benchmarks: GLUE and SuperGLUE (language understanding), SQuAD (question answering), WMT (machine translation).

Automated Metrics

Fast and cheap for iteration during development:

ROUGE (Recall-Oriented Understudy for Gisting Evaluation): measures overlap between generated summaries and reference summaries. Used for summarization and translation.

BLEU (Bilingual Evaluation Understudy): measures n-gram precision against reference translations. Primary metric for machine translation.

BERTScore: computes semantic similarity using BERT embeddings. Better than BLEU for capturing meaning even when exact wording differs.

The important caveat: these metrics measure specific quantitative properties. They do not always align with human judgment. Use them for rapid iteration, then validate the best candidates with human evaluation before shipping.

Stage 5: Deploy and Monitor

Passing evaluation does not mean the work is done. Production deployment requires monitoring for:

Model performance over time (outputs degrading as data distribution shifts)

Usage patterns and latency

Potential biases in outputs

Cost

Post-deployment, the loop continues. User feedback, usage data, and performance metrics feed back into the lifecycle. The model may need to be retrained, fine-tuned, or replaced as the application evolves.

Practical Takeaways

If you are building a generative AI application on AWS today, here is how to think about the five stages:

Define thoroughly before you write a single line of code. Know which metric (cost, time, quality, satisfaction, productivity) you are optimizing for.

Select a foundation model on Bedrock based on your use case requirements, not just what is popular. Claude 3 for text and vision, Titan for embeddings, Stable Diffusion for images.

Improve by starting at the bottom of the customization pyramid. Prompt engineering first. RAG if you need external knowledge. Fine-tuning only if you have domain data and prompt engineering is not enough. Training from scratch almost never.

Evaluate with a combination of automated metrics for iteration and human evaluation before any production deployment.

Monitor in production. The lifecycle is iterative because production data always reveals things that development environments do not.

The five stages are not a checklist. They are a loop. Understanding that is the difference between a demo and a production system.

Studying for the AWS AI Practitioner (AIF-C01) certification. Notes from the "Developing Generative AI Solutions" course.